To accomplish your business goals, you will need the power of Cloud Native Development to move fast. Today’s best businesses use the Cloud to accelerate every aspect of their engineering and product lifecycles. The Cloud Native Computing Foundation defines Cloud Native in the following terms:

“Cloud native technologies empower organizations to build and run scalable applications in modern, dynamic environments such as public, private, and hybrid clouds.”

Taking this definition from the Cloud Native Computing Foundation, what do business leaders need to understand to succeed in developing Cloud Native Applications? Winning with Cloud Native Development requires mastery of three pillars: Optimizations, Integrations and Cost Management.

Let’s explore each Cloud Native Computing pillar in more detail.


Greenfield Technology Stack Selection

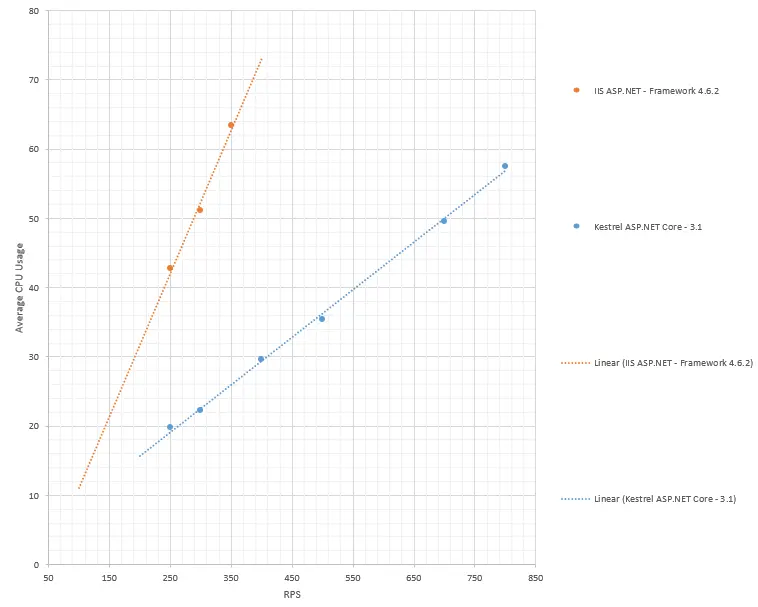

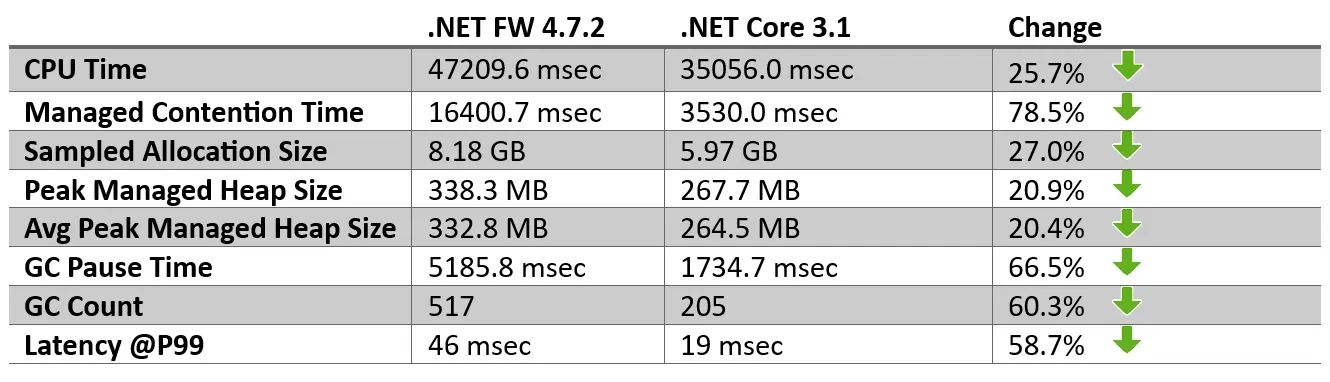

Optimizing the workloads that your business runs is a vital aspect of Cloud Native Development. In greenfield projects, selecting the proper tech stack, one that scales, supports modern best practices and enables best-in-class application performance, is extremely important. Selecting a slow technology stack can have massive cost implications. More often than not, the implications of a poor technology stack decision are not visible until real workloads hit the system. Be sure to perform due diligence and thorough research on the proposed technology stack. Use resources such as the TechEmpower Web Framework Benchmarks, or build proof-of-concept applications designed to push a proposed technology stack to its limits. As an example, take the following two Microsoft .NET technology stacks, both available on Microsoft Azure and AWS:

- .NET Framework 4.8
  - Microsoft’s Legacy Application Framework
- .NET 6 (aka DotNet Core)
  - Microsoft’s Modern, Cloud Native Application Development Framework

.NET Framework 4.8 vs .NET 6

When starting a new project that is free from legacy constraints, .NET 6 should be chosen every time. Enterprises that select the legacy .NET Framework are setting themselves up for failure when moving to the cloud. The legacy .NET Framework 4.8 will cost far more to scale and gives engineers a less complete set of tools for building Cloud Native Applications.
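The proof-of-concept benchmarking recommended earlier can start very small. The following is a minimal sketch, written in Python purely for illustration, of a harness that measures how many simulated requests per second two candidate implementations can handle. The names `measure_throughput`, `candidate_a` and `candidate_b` are hypothetical, and an in-process loop like this ignores networking, serialization and concurrency, so treat it as a starting point rather than a substitute for a real load test.

```python
import time
from typing import Callable


def measure_throughput(handler: Callable[[int], object], request_count: int) -> float:
    """Call handler once per simulated request and return requests per second."""
    start = time.perf_counter()
    for request_id in range(request_count):
        handler(request_id)
    elapsed = time.perf_counter() - start
    # Guard against a zero reading on very coarse clocks.
    return request_count / max(elapsed, 1e-9)


# Two hypothetical candidate implementations of the same endpoint.
def candidate_a(request_id: int) -> str:
    return "".join(str(i) for i in range(200))


def candidate_b(request_id: int) -> str:
    return str(request_id)


if __name__ == "__main__":
    for name, handler in [("candidate_a", candidate_a), ("candidate_b", candidate_b)]:
        rps = measure_throughput(handler, request_count=10_000)
        print(f"{name}: {rps:,.0f} requests/second")
```

In a real evaluation the two candidates would be the same endpoint built on each proposed stack, driven by a dedicated load-testing tool against production-like infrastructure.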

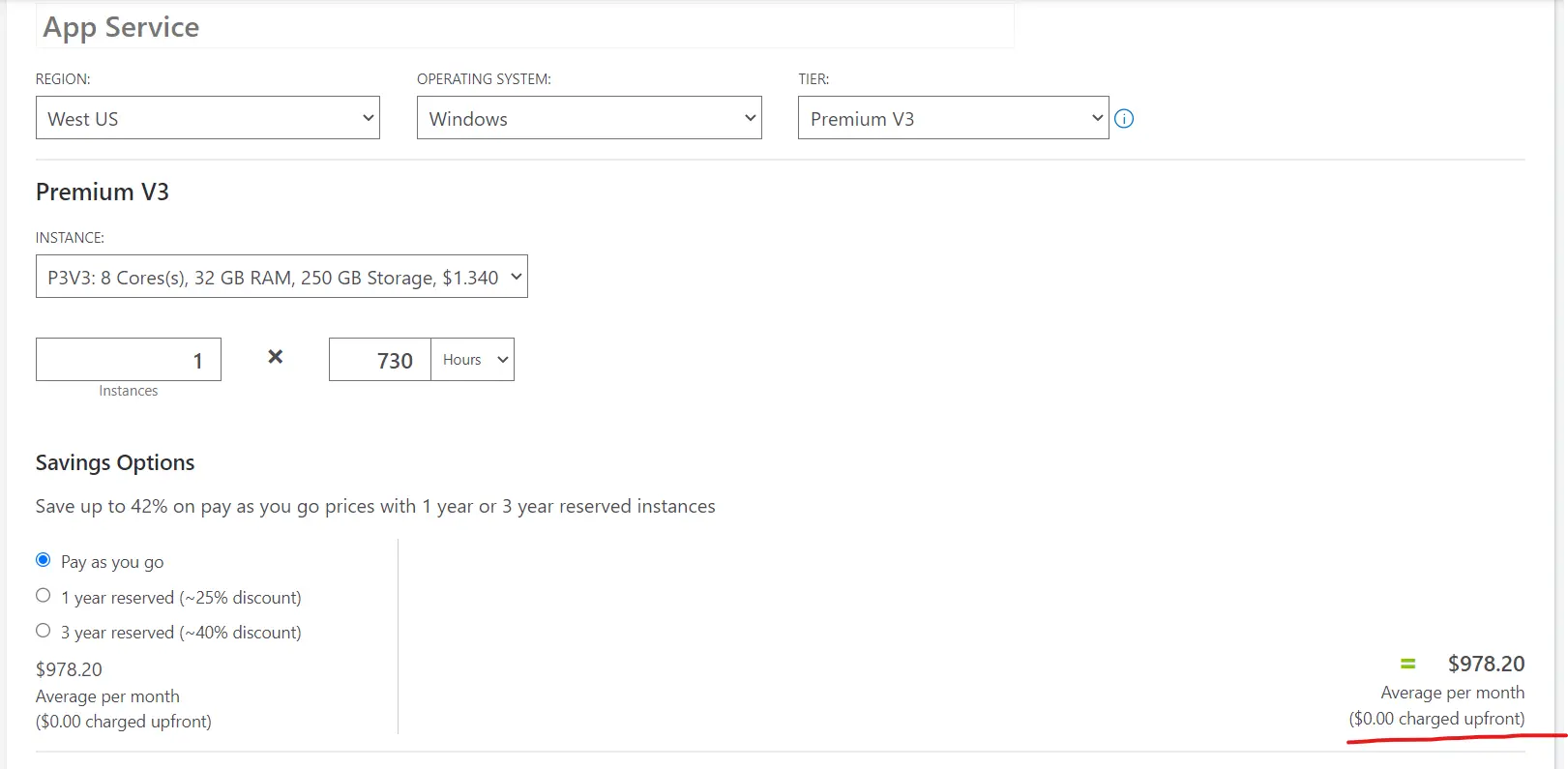

Legacy App Modernization

Many legacy applications are not ready for the cloud, or may in fact be more expensive to run in Cloud Native environments than in traditional on-premises configurations. While the business and capital costs of optimizing legacy on-premises workloads may seem to offer low ROI, more often than not that is not the case. Legacy apps do very well when upgraded, and upgrading offers significant benefits. As your app and user base continue to grow, you are naturally able to do more with a modern tech stack. A modern technology stack such as .NET 6 offers many indirect benefits, as well as some often overlooked direct benefits, mainly the ability to build cross-platform APIs that can provide massive cost savings when hosting your apps in Cloud Native environments. Compare Windows and Linux Azure App Service Plans: when you upgrade your app to .NET 6, you enable a potential Azure cost savings of nearly 50% ($978.20 vs $511.00 for a West US App Service Plan). Given the tooling around .NET 6, engineers can still develop on local Windows machines if they choose to and yet build a RESTful API that runs on Linux. It is a true win all around when upgrading your legacy app to run in a Cloud Native environment.
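To make the App Service Plan comparison concrete, here is a small sketch of the arithmetic using the two prices quoted above. The helper name `percent_savings` is hypothetical, and the calculation assumes both prices cover the same billing period.

```python
def percent_savings(current_cost: float, new_cost: float) -> float:
    """Return the saving from moving to new_cost, as a percentage of current_cost."""
    if current_cost <= 0:
        raise ValueError("current_cost must be positive")
    return (current_cost - new_cost) / current_cost * 100


# Figures quoted above for the West US App Service Plan comparison.
# Assumption: both are prices for the same billing period.
higher_plan = 978.20
lower_plan = 511.00

savings = percent_savings(higher_plan, lower_plan)
print(f"Saving per plan: ${higher_plan - lower_plan:.2f} ({savings:.1f}%)")
# prints: Saving per plan: $467.20 (47.8%)
```

The saving is per plan, so it multiplies across every plan an organization runs.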


App Migration Success Stories

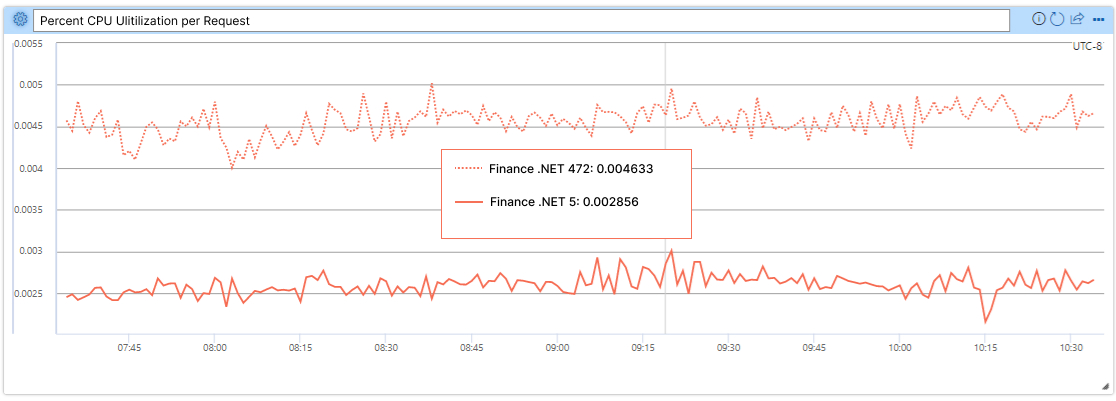

Here are a few more examples of the benefits of migrating to a modern Cloud Native app platform. I would emphasize that although the reported enhancements are described in technical, computer science terms, all performance gains translate into cost savings when running apps at scale in the cloud.

Microsoft Graph Team massively improved their Requests Per Second with an upgrade to DotNet Core

Exchange Online Team saw big benefits to CPU and Memory load from upgrading to DotNet Core

OneService reduced CPU load per request by 50% after upgrading to DotNet Core
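As a rough illustration of how a gain like a 50% reduction in CPU per request turns into a smaller bill, the sketch below estimates how many instances a steady workload needs before and after the improvement. The function name, the workload figures and the 70% target utilization are all hypothetical assumptions chosen for the example, not numbers reported by any of the teams above.

```python
import math


def instances_needed(requests_per_second: float,
                     cpu_seconds_per_request: float,
                     cores_per_instance: int,
                     target_utilization: float = 0.7) -> int:
    """Estimate how many instances are needed to serve a steady request rate."""
    # CPU-seconds of work arriving each second equals the number of busy cores.
    busy_cores = requests_per_second * cpu_seconds_per_request
    usable_cores_per_instance = cores_per_instance * target_utilization
    return max(1, math.ceil(busy_cores / usable_cores_per_instance))


# Hypothetical workload: 2,000 requests/second at 20 ms of CPU per request.
before = instances_needed(2000, 0.020, cores_per_instance=4)
# The same workload after a 50% reduction in CPU per request.
after = instances_needed(2000, 0.010, cores_per_instance=4)
print(before, after)  # prints: 15 8
```

Under these assumptions, halving the CPU cost of each request removes roughly half of the instances, and with them roughly half of the compute spend.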

Serverless

Another major trend in Cloud Native Computing is the increasing use of Serverless compute resources. Business applications built using Serverless technologies such as AWS Lambda and Azure Functions aim to fully optimize application workloads and subsequently reduce Cloud costs. To accomplish this goal, Serverless apps give organizations the ability to:

- Reduce Complexity
  - Do one thing. Do it well. Do it with less.
- Abstract from Hosting Environment
  - Developers shouldn’t have to know anything about the Cloud environment and should be able to run with their language of choice.
- Run on Demand
  - Reduce cost by only running on demand.


A common misconception when beginning the journey to Serverless applications is that they require an all-or-nothing approach. In practice, this couldn’t be further from the truth. Serverless is typically implemented for specific workloads, in combination with traditional Cloud technologies such as RESTful APIs, messaging queues, SQL databases and NoSQL databases. Email, report generation and timer-based application workloads can be great places to start reducing complexity and cost through Serverless operations. Many applications require reports, emails and similar jobs to be processed on recurring schedules. In a classic on-premises environment, this would mean integrating with the host OS via operating system services such as a Windows Service or systemd, monitoring the operation of the service through logging and third-party tools, and ensuring that the proper mechanisms are in place to restart the timing service. In a Serverless Cloud Native environment, Serverless workloads are abstracted from the host OS, and both logging and timing of the workload come built in, with many options for extension and customization. Cloud Native engineers can return to building apps, deal far less with the intricacies of the hosting environment and realize significant cost savings.
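To show how little hosting code a scheduled Serverless workload needs, here is a minimal sketch of a report job written in the style of a Python AWS Lambda handler, which takes an `event` and a `context` argument. The event shape, the `build_report` helper and the order data are hypothetical; the schedule itself would be configured in the cloud platform rather than in this code.

```python
from datetime import datetime, timezone


def build_report(orders: list[dict]) -> str:
    """Summarize a batch of orders as a short plain-text report."""
    total = sum(order["amount"] for order in orders)
    return f"{len(orders)} orders, total ${total:.2f}"


def handler(event: dict, context: object) -> dict:
    """Entry point in the style of a Python AWS Lambda handler.

    The platform's scheduler, not this code, decides when the function runs,
    so there is no service loop, restart logic, or OS integration here.
    """
    # Hypothetical event shape: the orders to report on arrive in the payload.
    orders = event.get("orders", [])
    return {
        "generated_at": datetime.now(timezone.utc).isoformat(),
        "report": build_report(orders),
    }


if __name__ == "__main__":
    sample_event = {"orders": [{"amount": 19.99}, {"amount": 5.01}]}
    print(handler(sample_event, context=None)["report"])
```

Everything the on-premises version had to manage itself, such as the timer, restarts and log collection, is absent from the function because the platform provides it.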


Select the Right Integrations to Accelerate Cloud Native Development

Cloud Native business practices must take advantage of the integrations offered by the Cloud provider. While the ease with which businesses can scale is important, and in its own right a worthwhile reason to move to Cloud Native development practices, many corporations forget about the hundreds if not thousands of offerings available from cloud providers. If we’re going to maximize Cloud Native development and ROI, these services and integrations must be taken into consideration. The most obvious cloud provider services that meet these criteria are Authentication and Authorization services, but there are many more services and service categories. Cloud Native integrations occasionally occur before services are automated, but automation of service integration and infrastructure deployments must follow shortly after successful proofs-of-concept.

Explore All Available Services

Many organizations fail to realize the full ROI of their Cloud Native operations because they are simply unaware of the depth and breadth of Cloud Native development services at their disposal. The two largest Cloud providers, Azure and AWS, offer a combined 900+ services that are deployable at a moment’s notice. While the reluctance to explore new Cloud services is understandable, Cloud Native App development is designed to work in agile environments where it is cheap and easy to prove out new concepts with Cloud Services. Business leaders should exploit the ease with which they can build out new concepts and advance their workloads into the Cloud Native world that is today’s business environment.


Effectively, all cloud providers grant their customers the ability to rent compute and storage at a moment’s notice. Got a big workload that just came in and you need to manage it? No problem. In Cloud Native environments you can instantly scale to your requirements. The unfortunate corollary to instant scale is instant cost increases. In Cloud Native development:

- Every CPU Cycle Counts

- Every Data Bit Stored Counts

Leaders looking to move towards Cloud Native development must get back to basics and fully understand their data and compute costs. In a world in which data compliance is ever more important, businesses will be required to carry years if not decades’ worth of data. For the majority of business leaders, focusing on data costs will yield the greatest cost savings.
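To see why long retention periods make data costs so significant, the sketch below totals a storage bill when data accumulates every month and nothing is deleted. The function name, the monthly data volume and the price per GB-month are hypothetical assumptions chosen for the example, not a quote from any provider.

```python
def cumulative_storage_cost(gb_added_per_month: float,
                            price_per_gb_month: float,
                            retention_months: int) -> float:
    """Total storage bill when data accumulates and nothing is deleted."""
    total = 0.0
    stored_gb = 0.0
    for _ in range(retention_months):
        stored_gb += gb_added_per_month          # data keeps accumulating
        total += stored_gb * price_per_gb_month  # pay for everything held this month
    return total


# Hypothetical inputs: 500 GB of new data per month at $0.02 per GB-month.
one_year = cumulative_storage_cost(500, 0.02, retention_months=12)
ten_years = cumulative_storage_cost(500, 0.02, retention_months=120)
print(f"1 year: ${one_year:,.2f}  10 years: ${ten_years:,.2f}")
```

Because each month is billed on everything stored so far, the total grows roughly with the square of the retention period: under these assumptions, ten times the retention costs about 93 times as much.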

CPU and Data Costs

The key to reducing costs in Cloud Native environments is automation. Business leaders must strive to automate all aspects of their cloud services. Automation enables agility in managing data and compute. While solid DevOps is necessary to keep costs down, IT and engineering leadership must also drive their organizations towards Infrastructure as Code (IaC) and its sibling, containerization. Why are IaC and containers so important? Take an example from classic IT infrastructure: traditional data storage relies heavily on the hardware, either virtualized or physical. With Infrastructure as Code, best practices for agile development lifecycles become instantly viable for what has historically been one of the hardest aspects of development: DevOps and the management of data. When data workloads become repeatable, testable and agile, engineers are free to move fast and improve where and how data is stored without incurring the cost of keeping data resources continually deployed. The same is true from a Cloud Native compute perspective. Engineers gain the ability to quickly test and revise compute workloads through IaC and container automation, while business leaders are free to leverage the cost savings. I like to call this approach to Cloud Native development and Cloud Infrastructure “Just-In-Time Infrastructure” or JITI.

While Just-In-Time Infrastructure may seem like a pipe dream for many organizations, the tooling for JITI continues to take major steps forward. Technologies to keep an eye on in this area are HashiCorp’s Terraform, Microsoft’s Azure Bicep and Pulumi’s Cloud Engineering Platform. Incorporating JITI practices into DevOps is yet another layer of abstraction for engineering to master, but one that ultimately has massively positive implications for Cloud Native Computing and cost management.
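The Just-In-Time Infrastructure idea can be pictured as "deploy, use, tear down" wrapped around a workload. The sketch below models that pattern with a Python context manager. It is an illustration of the concept only: the `just_in_time` helper, the in-memory `deployed_resources` set and the resource name are hypothetical stand-ins for whatever an IaC tool would actually provision and destroy.

```python
from contextlib import contextmanager
from typing import Iterator

# Stand-in for a cloud account; a real setup would call an IaC tool instead.
deployed_resources: set[str] = set()


@contextmanager
def just_in_time(resource_name: str) -> Iterator[str]:
    """Provision a resource for the duration of a block, then tear it down."""
    deployed_resources.add(resource_name)          # hypothetical "deploy" step
    try:
        yield resource_name
    finally:
        # Teardown runs even if the workload fails, so nothing is left billing.
        deployed_resources.discard(resource_name)  # hypothetical "destroy" step


def run_nightly_job() -> int:
    """Hypothetical workload that only needs its database while it runs."""
    with just_in_time("reporting-db") as db:
        assert db in deployed_resources
        return len(deployed_resources)


if __name__ == "__main__":
    print(run_nightly_job(), len(deployed_resources))  # prints: 1 0
```

The design point is that the resource exists only while the workload needs it, which is what keeps data and compute from sitting deployed, and billed, between runs.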

Cloud Native Success

Today’s Cloud Native Computing landscape is the most exciting it has ever been. Through the incredible advances made by cloud providers like AWS, Azure and Google Cloud Platform, engineers and corporations have access to Cloud Native Computing at a global scale they have never had before. While Cloud Native Computing success may seem impossible, don’t lose sight of the fact that it can be broken down into Optimizations, Integrations and Cost Management. Focus on those core pillars and you will find success regardless of the cloud provider platform that you choose.

Thanks again for reading along with me and please feel free to leave some comments below in the DISQUS chat! Have a wonderful day and happy coding.

Resources

Cloud Native Computing Foundation

Born in the Cloud, but not Absolute

Gartner Top 6 Trends Impacting Infrastructure and Operations
